Does your team or project use code reviews? If not, I suggest starting today. There are countless resources online about how and why to do code reviews, and there are numerous code review tools, both open source and commercial.

Who should review code? A great question, perhaps for other blog posts.

When should code be reviewed? Hopefully also a great question, and the topic of this blog post.

Context

When making a recommendation, I’m trying to get in the habit of always mentioning the context, the situation in my work from which my recommendation arises. This is in response to seeing frustration when people try to follow advice, not realizing that their situation is completely unlike that of the writer.

Here at Oasis Digital, our context is mostly complex line-of-business applications, which are expected to live for a long time and are important to the business operations of the companies that use them. We are almost always replacing an existing system (which a company relies on) or expanding an existing system (which a company relies on).

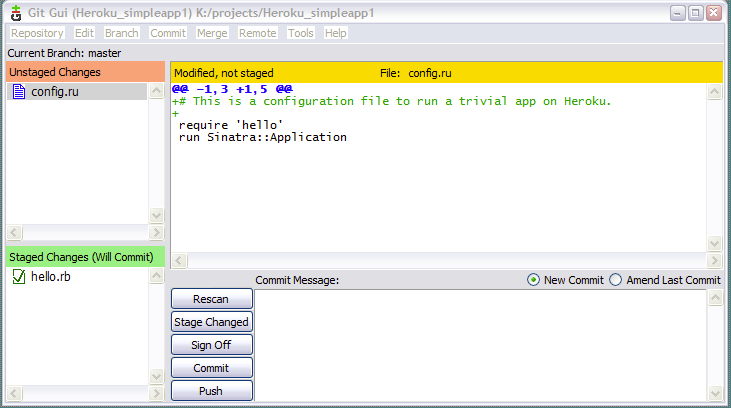

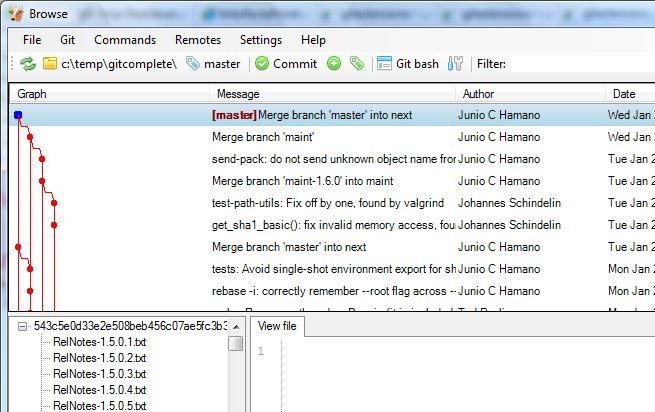

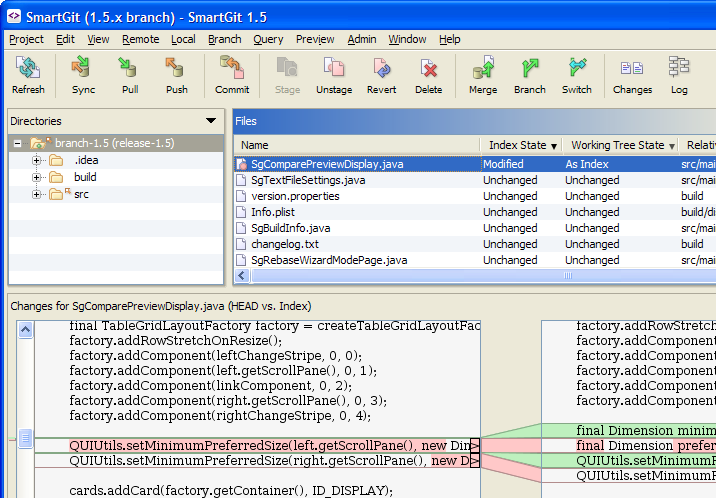

Another critical part of our context: we have flexible and fast source control, and a group of developers who have learned how to use it competently. We have decoupled source control from build automation, so that we can perform builds of branches in progress without merging them to a mainline. We can achieve a sufficient degree of continuous integration without actually putting each change directly into the same code line.

Review Code Early

How long after code has been written should it be reviewed? Hours or days, not weeks. For this reason, we commit and push code frequently (to a disposable branch of course) where others can see it for early review. It can be a challenge to get past the ego-driven desire to make code perfect before exposing it to the scrutiny of others, but it is worthwhile. By exposing code to review and comment early in its life, a developer can get useful feedback early in a unit of work.

Review Code Often

Another anti-pattern of code review is to look at a given proposed change only once or twice. That is sensible for a very small change, but for a complex change to a complex system it is best to perform code review repeatedly as work proceeds. For example, consider a feature that takes four weeks to implement. Our typical approach, which I recommend to others (if their context is reasonably similar to ours, see above) is something like this:

- Review the code a few days in.

- Review the code progress once a week or so.

- Review the code when it is nearly ready to be called “done” and to go in the mainline.

- If there are fixes needed, review those promptly so as not to cause delay.

Review Code Now

Code waiting for review is a drag on the speed of development; the right time to review code is now. A quick review now is probably more valuable than a deep review deferred.

Review Code as a Gate

In some organizations, code review happens asynchronously and sporadically, after code is already part of a project. This risks software quality collapse. Once code is in the mainline of a product, it is arguably too late to review. If a reviewer finds a problem with code already in a system, there will be pressure to sweep that problem under the rug and move on, or to convince you that it is not really a problem, or that quality doesn’t really matter, just this once.

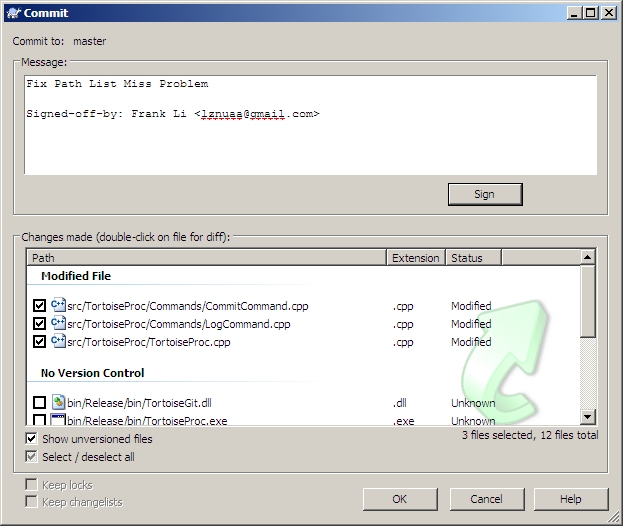

The right answer is simple: use code review as a gate through which code must pass before it can become part of the mainline of the project. Use your source control system, and stack up proposed code changes that are nearly ready to go into the mainline. Then periodically perform your review process (whether it is in person, remote, synchronous, asynchronous, etc.). Once the code passes a final review process, then it goes in the mainline. If there are serious problems, the code sits in a branch, a developer improves it, and it tries again next time.
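To make the gate idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of any particular tool: the names `ProposedChange`, `ReviewGate`, `propose`, and `run_review_round` are hypothetical, and plain lists stand in for the real branches and mainline in your source control system.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ProposedChange:
    """A branch that is nearly ready to go into the mainline (hypothetical model)."""
    branch: str
    approved: bool = False  # set by whatever review process the team uses


@dataclass
class ReviewGate:
    """Holds proposed changes until they pass review (hypothetical model)."""
    mainline: List[str] = field(default_factory=list)
    waiting: List[ProposedChange] = field(default_factory=list)

    def propose(self, change: ProposedChange) -> None:
        # Stack up the change; it is not part of the mainline yet.
        self.waiting.append(change)

    def run_review_round(self) -> None:
        # Approved changes go into the mainline. Everything else stays
        # in its branch and tries again in the next round.
        still_waiting = []
        for change in self.waiting:
            if change.approved:
                self.mainline.append(change.branch)
            else:
                still_waiting.append(change)
        self.waiting = still_waiting


gate = ReviewGate()
feature_a = ProposedChange("feature-a", approved=True)
feature_b = ProposedChange("feature-b")  # reviewers found serious problems
gate.propose(feature_a)
gate.propose(feature_b)
gate.run_review_round()

# A developer improves feature-b, it passes the next review, and it goes in.
feature_b.approved = True
gate.run_review_round()
```

The point of the sketch is the one-way door: nothing reaches `mainline` except through `run_review_round`, so a change with problems waits in its branch instead of becoming something the team is pressured to live with.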

We have had excellent results with this approach, maintaining software quality for years on end even through ongoing development team turnover.